Toxicity - a Hugging Face Space by evaluate-measurement

By a mysterious writer

Description

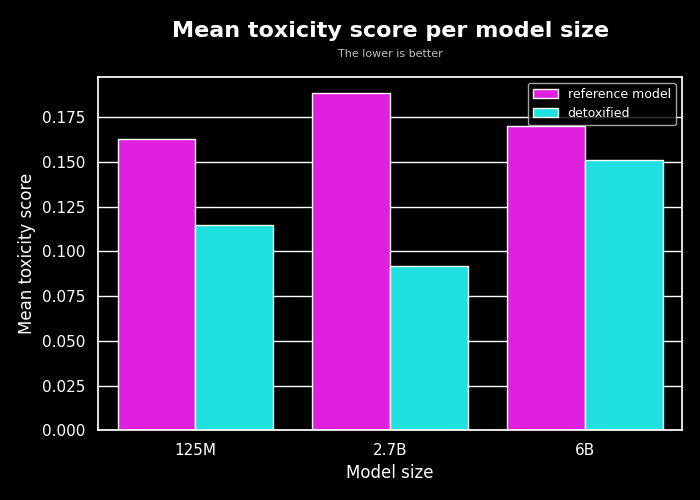

The toxicity measurement aims to quantify the toxicity of the input texts using a pretrained hate speech classification model.
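As a minimal sketch of how such a measurement might be called, the snippet below loads the `toxicity` measurement through the `evaluate` library and returns one score per input text. The helper names `score_texts` and `summarize_scores`, the 0.5 threshold, and the `>=` comparison are illustrative assumptions rather than part of the Space itself; `summarize_scores` is a local stand-in for summarizing scores, not the library's own aggregation code.

```python
from typing import Dict, List


def score_texts(texts: List[str]) -> List[float]:
    """Return one toxicity score per input text via the `evaluate` measurement."""
    # Imported lazily so summarize_scores() works without `evaluate` installed.
    import evaluate

    # Loading the measurement downloads its pretrained classifier on first use.
    toxicity = evaluate.load("toxicity", module_type="measurement")
    results = toxicity.compute(predictions=texts)
    return results["toxicity"]


def summarize_scores(scores: List[float], threshold: float = 0.5) -> Dict[str, float]:
    """Hypothetical helper: highest score and share of scores at or above threshold."""
    if not scores:
        raise ValueError("scores must not be empty")
    flagged = sum(1 for s in scores if s >= threshold)
    return {
        "max_toxicity": max(scores),
        "toxicity_ratio": flagged / len(scores),
    }
```

With network access and `evaluate` installed, `summarize_scores(score_texts(["first text", "second text"]))` would score both texts and report the highest score alongside the fraction flagged as toxic under the assumed threshold.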

Hugging Face Fights Biases with New Metrics

Detoxifying a Language Model using PPO

Descriptor-Free Deep Learning QSAR Model for the Fraction Unbound in Human Plasma

Catastrophic Incident Search and Rescue - U.S. Coast Guard

Llama 2 on Hugging Face

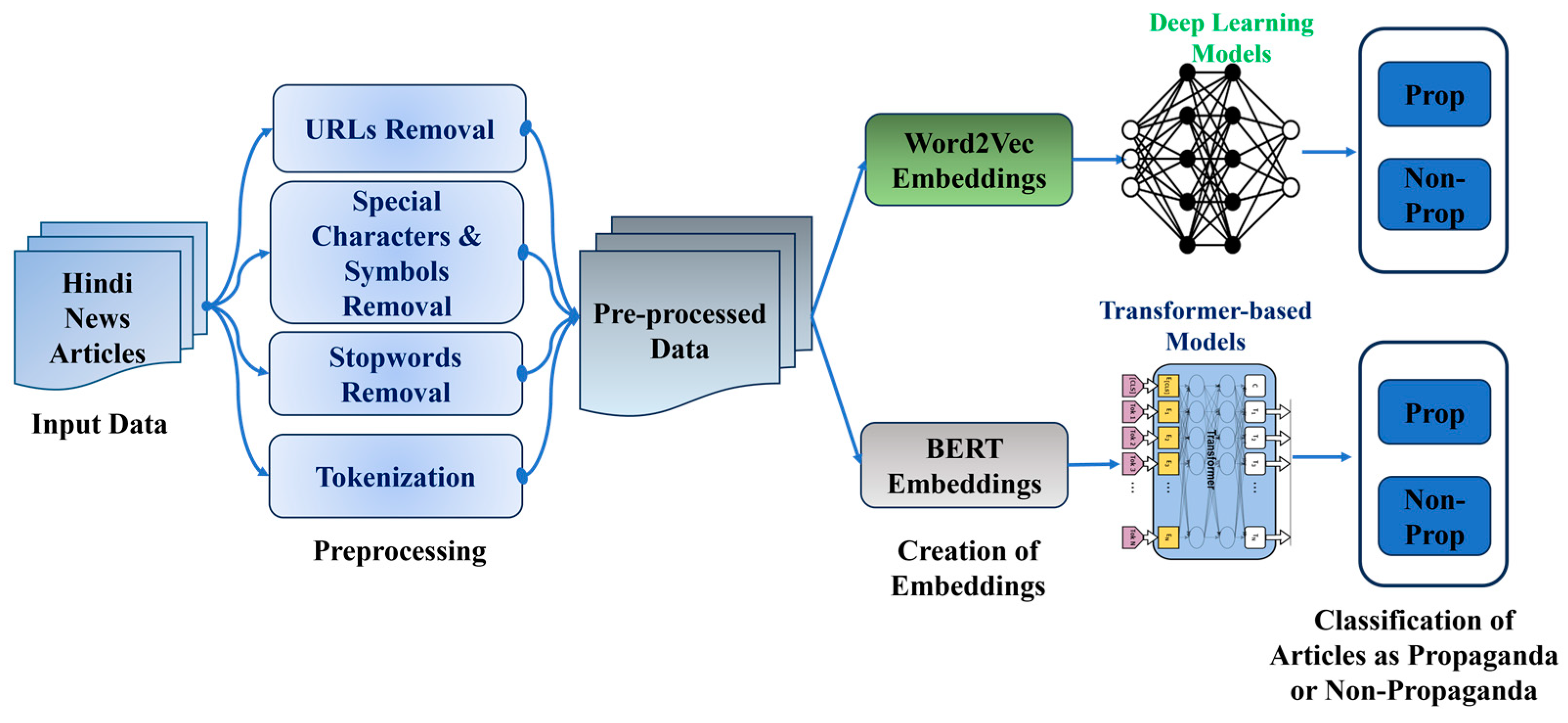

BDCC, Free Full-Text

Jigsaw Unintended Bias in Toxicity Classification — Kaggle Competition, by Vaibhavb

Are you Selecting the Right Data for Your Computer Vision Projects?

Combined metrics ignore some arguments · Issue #423 · huggingface/evaluate · GitHub
