RuntimeError: CUDA out of memory. Tried to allocate - Can I solve this problem? - windows - PyTorch Forums

Description

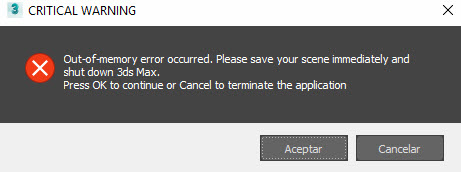

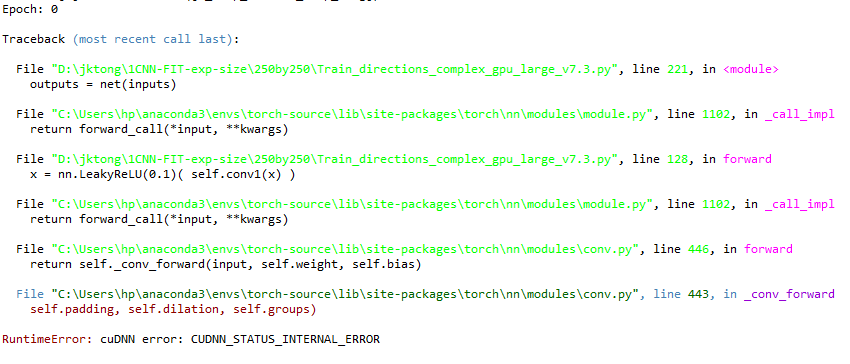

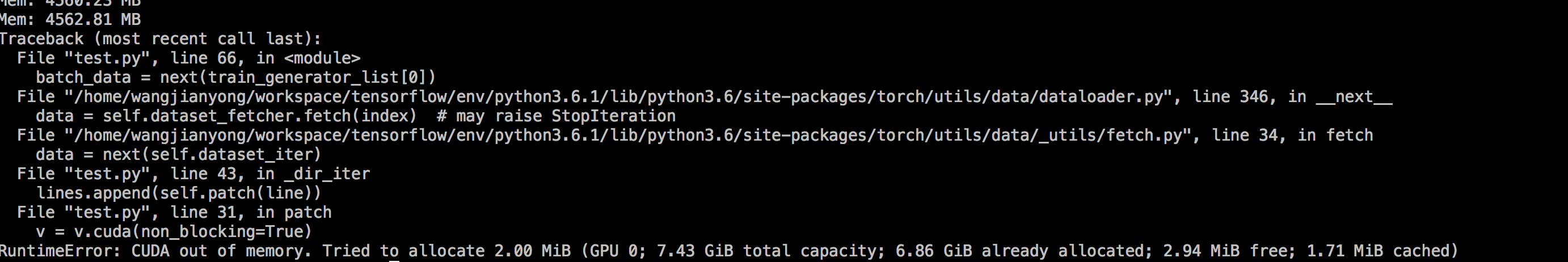

Hello everyone. I am trying to get CUDA working with the OpenAI Whisper release. My current setup works fine on the CPU, where I use the medium.en model. I have installed CUDA-enabled PyTorch on a Windows 10 computer; however, when I try speech-to-text decoding with CUDA enabled, it fails with a memory error: RuntimeError: CUDA out of memory. Tried to allocate 70.00 MiB (GPU 0; 4.00 GiB total capacity; 2.87 GiB already allocated; 0 bytes free; 2.88 GiB reserved in total by PyTorch) If reserved memory is >> allo
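To read the numbers in that error more easily, here is a minimal sketch that parses the message quoted above and converts every figure to MiB. The helper names (`parse_oom_message`, `to_mib`) are hypothetical and not part of PyTorch, and the regular expression assumes the exact wording of the message in this post; other PyTorch versions may phrase it differently.

```python
import re

# Unit multipliers, expressed in MiB, for the sizes that appear in the message.
_UNITS_IN_MIB = {
    "bytes": 1 / (1024 * 1024),
    "KiB": 1 / 1024,
    "MiB": 1.0,
    "GiB": 1024.0,
}

# One "<number> <unit>" size, e.g. "70.00 MiB" or "0 bytes".
_SIZE = r"([\d.]+) (bytes|KiB|MiB|GiB)"

# Assumption: the message follows the wording quoted in the post.
_PATTERN = re.compile(
    rf"Tried to allocate {_SIZE} \(GPU (\d+); {_SIZE} total capacity; "
    rf"{_SIZE} already allocated; {_SIZE} free; {_SIZE} reserved"
)


def to_mib(value, unit):
    """Convert a (number, unit) pair from the message into MiB."""
    return float(value) * _UNITS_IN_MIB[unit]


def parse_oom_message(message):
    """Hypothetical helper: extract the memory figures from the OOM text."""
    match = _PATTERN.search(message)
    if match is None:
        raise ValueError("message does not match the expected OOM format")
    g = match.groups()
    return {
        "requested_mib": to_mib(g[0], g[1]),
        "gpu": int(g[2]),
        "total_mib": to_mib(g[3], g[4]),
        "allocated_mib": to_mib(g[5], g[6]),
        "free_mib": to_mib(g[7], g[8]),
        "reserved_mib": to_mib(g[9], g[10]),
    }


MESSAGE = (
    "RuntimeError: CUDA out of memory. Tried to allocate 70.00 MiB "
    "(GPU 0; 4.00 GiB total capacity; 2.87 GiB already allocated; "
    "0 bytes free; 2.88 GiB reserved in total by PyTorch)"
)

stats = parse_oom_message(MESSAGE)

# Memory PyTorch has cached but is not currently using for tensors.
cached_but_unused = stats["reserved_mib"] - stats["allocated_mib"]

# Memory on the card that PyTorch's allocator does not hold at all.
outside_pytorch = stats["total_mib"] - stats["reserved_mib"]

print(f"requested:         {stats['requested_mib']:.2f} MiB")
print(f"cached but unused: {cached_but_unused:.2f} MiB")
print(f"outside PyTorch:   {outside_pytorch:.2f} MiB")
```

In this case the reserved and allocated figures are almost identical, so the cached-but-unused slack is far smaller than the 70 MiB request. That suggests the "reserved memory is >> allocated" fragmentation hint probably does not apply here, and that the model plus its working buffers simply does not fit in what is left of the 4 GiB card. The Whisper README lists approximately 5 GB of required VRAM for the medium models, which would be consistent with this, so a smaller checkpoint is likely the more practical route on this GPU.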

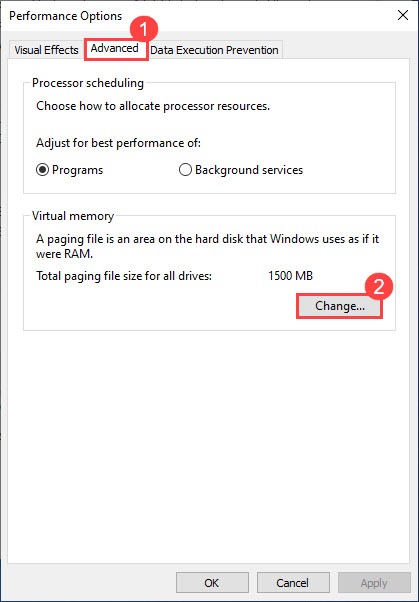

How to allocate more GPU memory to be reserved by PyTorch to avoid

DataParallel memory consumption in PyTorch 0.4 - PyTorch Forums

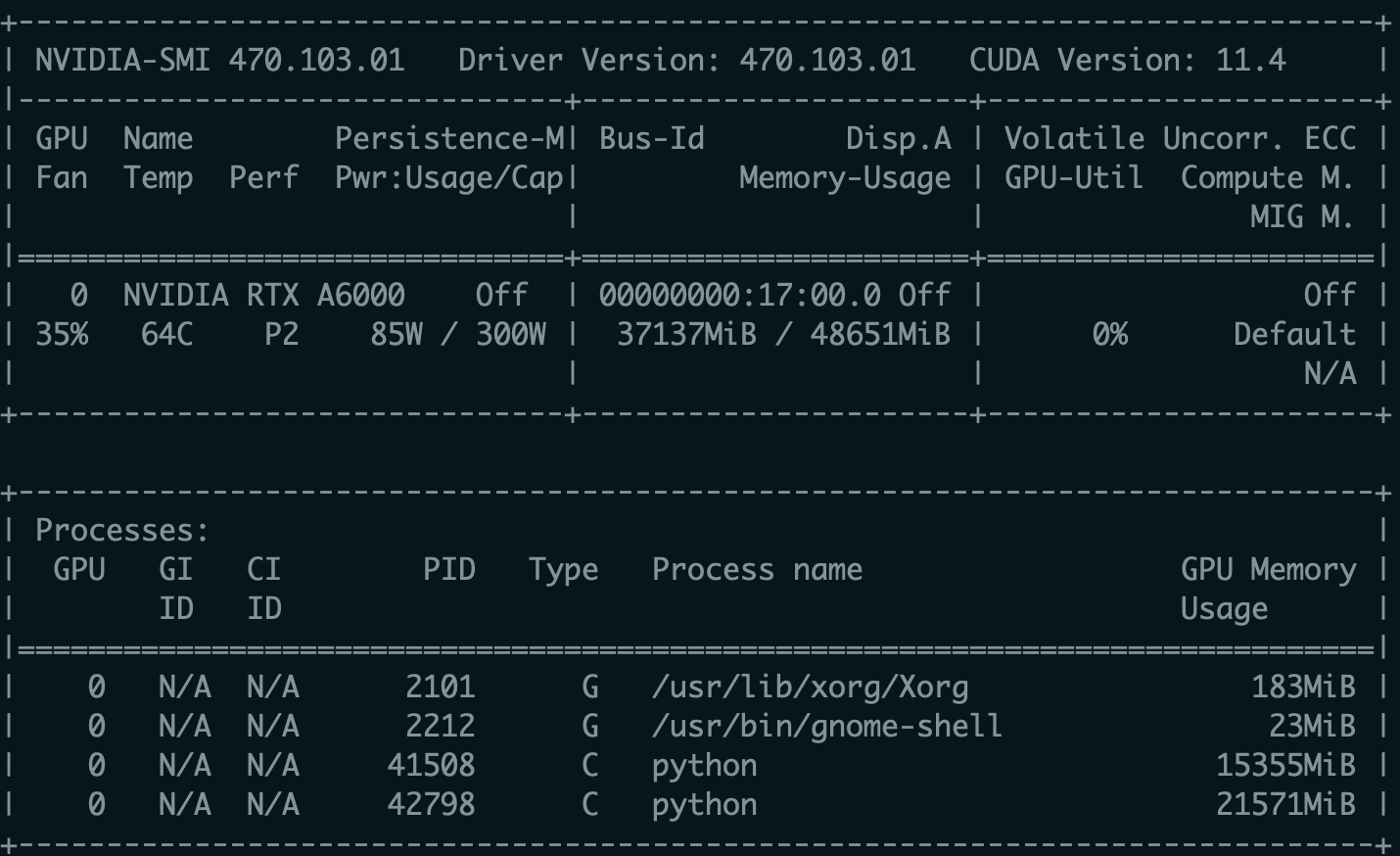

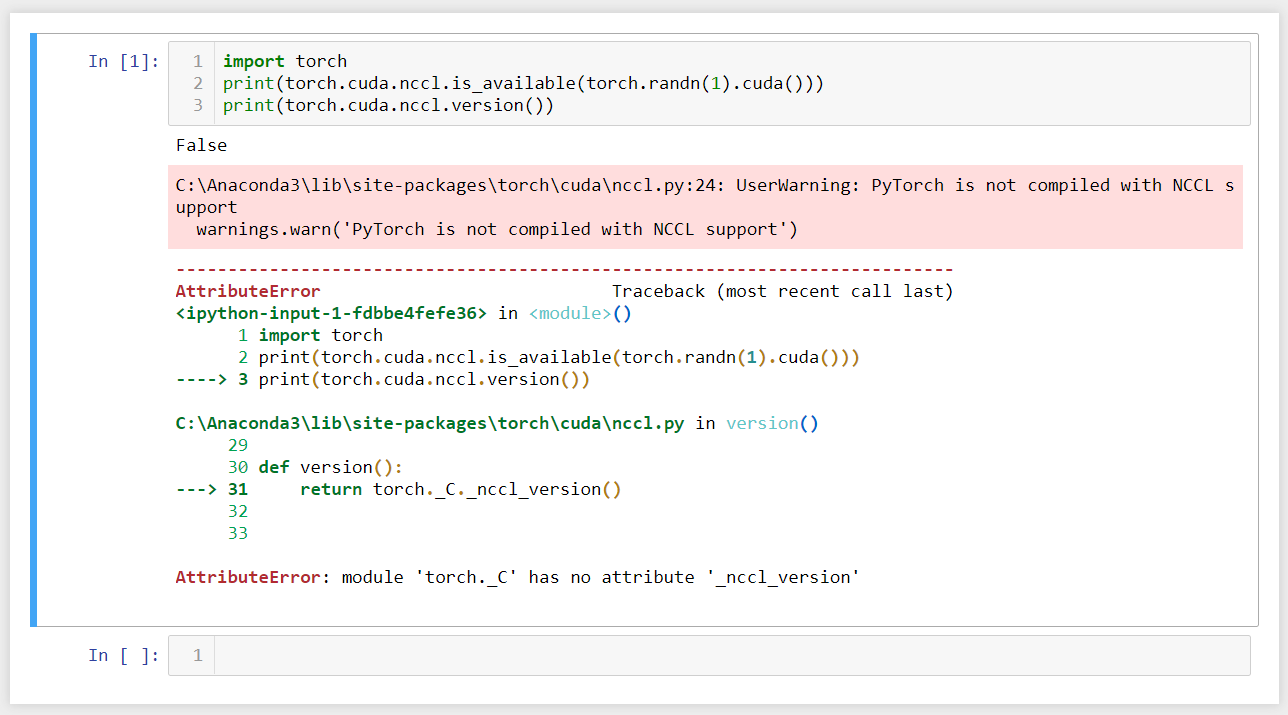

(Updated) NVIDIA RTX A6000 INCOMPATIBLE WITH PYTORCH - windows

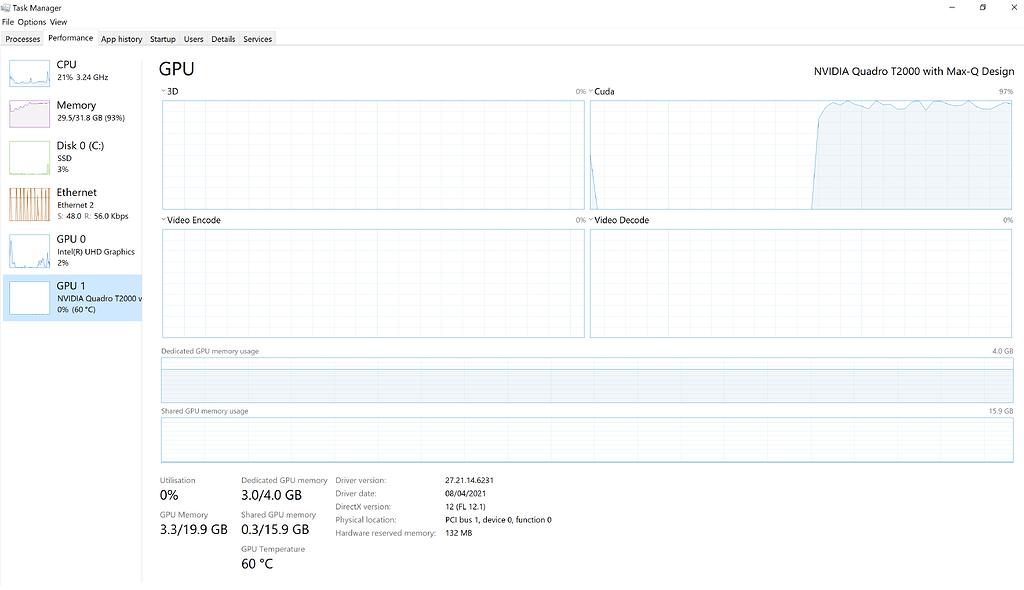

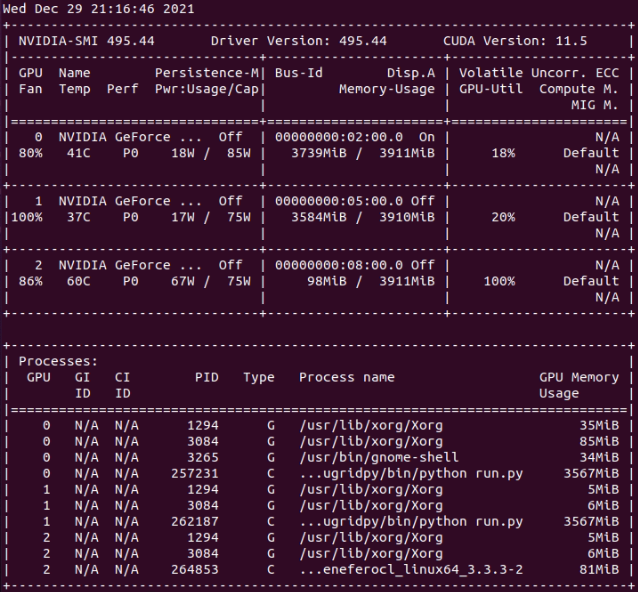

CUDA utilization - PyTorch Forums

How to resolve “RuntimeError: CUDA out of memory”?

Windows 10 Help Forums

Failing to load models due to CUDA out of memory creates unclear

How to solve gpu-memory-leak of DataLoader - PyTorch Forums

2.5GB of video memory missing in TensorFlow on both Linux and

CUDA Out of Memory on RTX 3060 with TF/Pytorch - cuDNN - NVIDIA

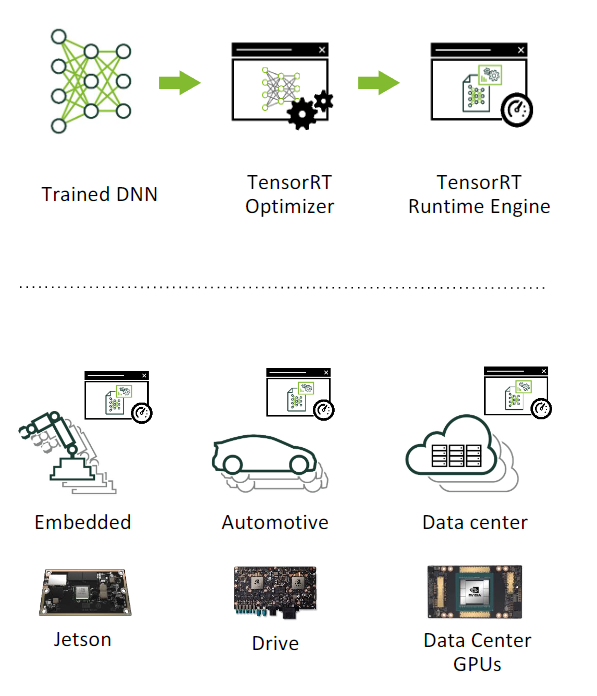

Developer Guide :: NVIDIA Deep Learning TensorRT Documentation

CUDA Out of Memory when trying to allocate 16 EiB · Issue #33143

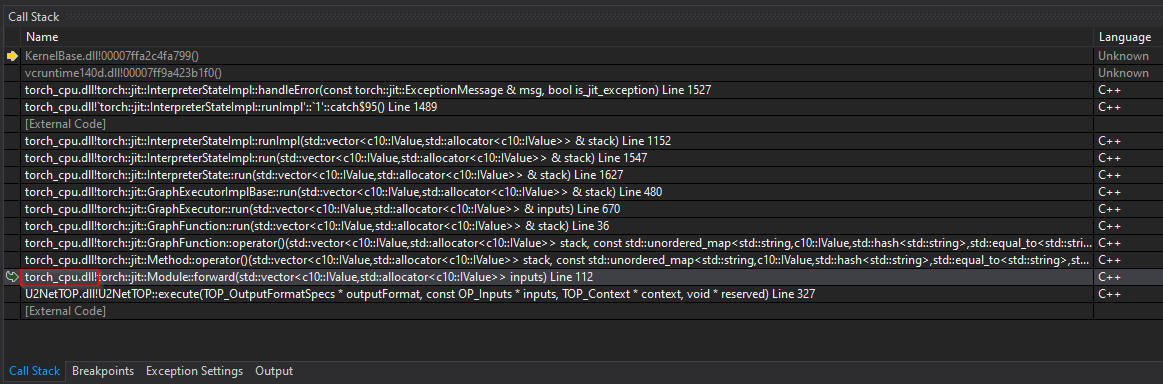

Debugging runtime error module->forward(inputs) libtorch 1.4 - jit

Experimental Python tasks (beta) - task description
