Visualizing the gradient descent method

By an anonymous author

Description

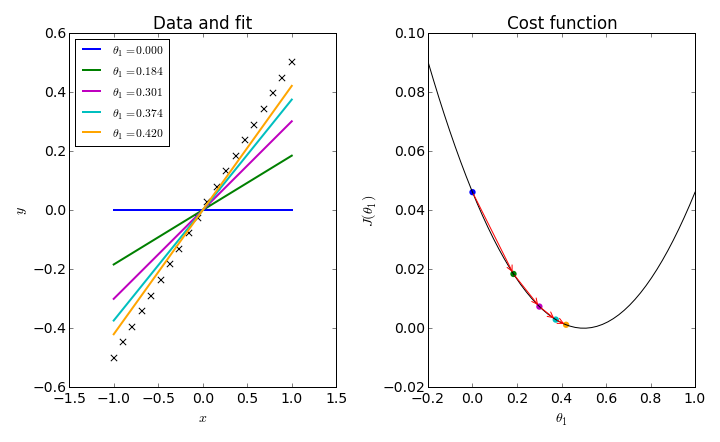

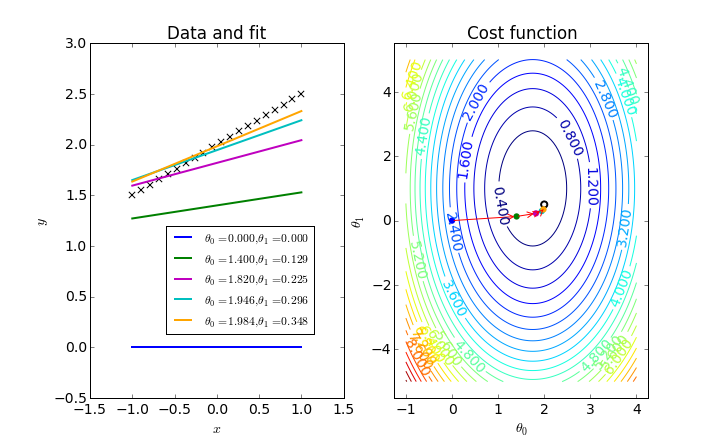

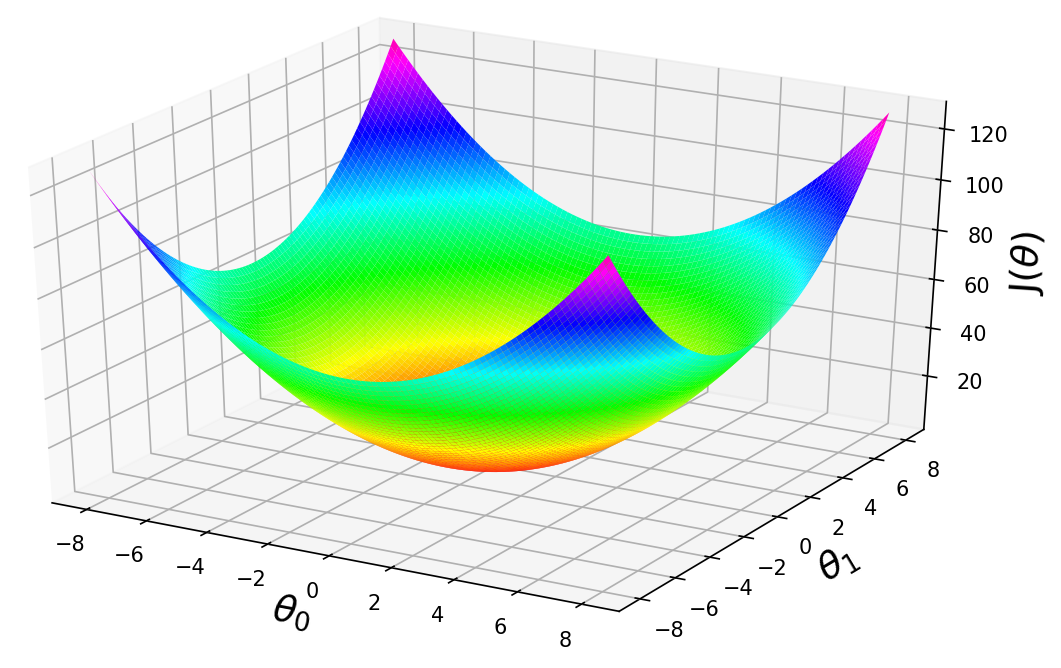

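In the gradient descent method of optimization, a hypothesis function $h_\boldsymbol{\theta}(x)$ is fitted to a data set $(x^{(i)}, y^{(i)})$, $i = 1, 2, \ldots, m$, by minimizing an associated cost function $J(\boldsymbol{\theta})$ with respect to the parameters $\boldsymbol{\theta} = (\theta_0, \theta_1, \ldots)$. The cost function measures how closely the hypothesis fits the data for a given choice of $\boldsymbol{\theta}$. Starting from an initial guess, each step moves the parameters a small distance against the gradient, $\boldsymbol{\theta} \leftarrow \boldsymbol{\theta} - \alpha \nabla J(\boldsymbol{\theta})$, where the learning rate $\alpha$ sets the step size.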
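The paragraph above does not fix a particular hypothesis or cost function. As a minimal sketch, assume a straight-line hypothesis $h_\boldsymbol{\theta}(x) = \theta_0 + \theta_1 x$ and the common mean-squared-error cost $J(\boldsymbol{\theta}) = \frac{1}{2m}\sum_{i=1}^{m} \big(h_\boldsymbol{\theta}(x^{(i)}) - y^{(i)}\big)^2$. Batch gradient descent then repeatedly moves both parameters against the gradient of $J$, scaled by a learning rate `alpha`. The function names, `alpha`, the step count and the sample data are illustrative assumptions, not taken from the original. The function returns every point visited, since that trajectory is what one would overlay on a contour plot of $J(\theta_0, \theta_1)$.

```python
def cost(theta0, theta1, xs, ys):
    """Mean-squared-error cost J = 1/(2m) * sum((h(x) - y)**2) (assumed form)."""
    m = len(xs)
    return sum((theta0 + theta1 * x - y) ** 2 for x, y in zip(xs, ys)) / (2 * m)


def gradient_descent(xs, ys, alpha=0.1, n_steps=500, theta0=0.0, theta1=0.0):
    """Batch gradient descent for the assumed hypothesis h(x) = theta0 + theta1 * x.

    Returns every (theta0, theta1, J) visited, i.e. the trajectory one
    would draw on top of a contour plot of J(theta0, theta1).
    """
    m = len(xs)
    path = [(theta0, theta1, cost(theta0, theta1, xs, ys))]
    for _ in range(n_steps):
        # Residuals h(x_i) - y_i for the current parameters.
        residuals = [theta0 + theta1 * x - y for x, y in zip(xs, ys)]
        # Partial derivatives of J with respect to theta0 and theta1.
        grad0 = sum(residuals) / m
        grad1 = sum(r * x for r, x in zip(residuals, xs)) / m
        # Simultaneous update: step against the gradient, scaled by alpha.
        theta0, theta1 = theta0 - alpha * grad0, theta1 - alpha * grad1
        path.append((theta0, theta1, cost(theta0, theta1, xs, ys)))
    return path


# Hypothetical data lying exactly on the line y = 1 + 2x.
path = gradient_descent([0, 1, 2, 3, 4], [1, 3, 5, 7, 9])
theta0, theta1, final_cost = path[-1]
print(f"theta0={theta0:.4f}, theta1={theta1:.4f}, J={final_cost:.2e}")
```

With these points the iterates approach $\theta_0 = 1$, $\theta_1 = 2$, the exact line through the data. If `alpha` is made too large the iterates diverge instead, which is easy to spot once the path is plotted.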

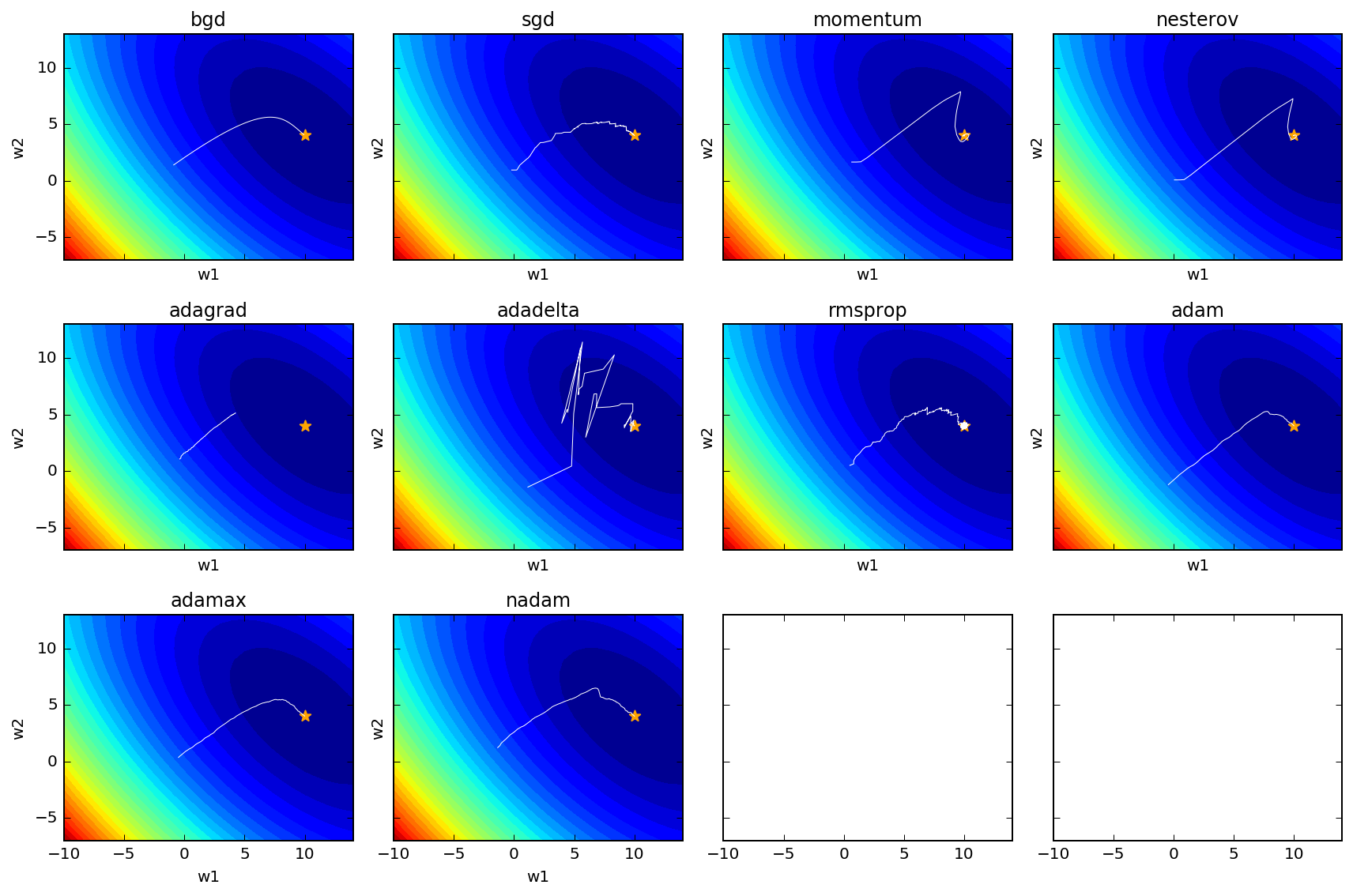

A Visual Explanation of Gradient Descent Methods (Momentum, AdaGrad, RMSProp, Adam), by Lili Jiang
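To make the difference between plain steps and these variants concrete, here is a minimal sketch of the standard textbook update rules for two of the four methods named above: heavy-ball momentum and Adam (with the default $\beta_1 = 0.9$, $\beta_2 = 0.999$, $\epsilon = 10^{-8}$ from the original Adam paper). The test surface $f(x, y) = x^2 + 10y^2$, an elongated bowl, together with the learning rates, step counts and function names, is a hypothetical choice for illustration and is not taken from Lili Jiang's article.

```python
import math


def grad(p):
    """Gradient of the hypothetical test surface f(x, y) = x**2 + 10 * y**2."""
    x, y = p
    return (2 * x, 20 * y)


def momentum(start, lr=0.02, beta=0.9, n_steps=300):
    """Heavy-ball momentum: the velocity v accumulates past gradients."""
    p = list(start)
    v = [0.0, 0.0]
    for _ in range(n_steps):
        g = grad(p)
        v = [beta * vi + gi for vi, gi in zip(v, g)]
        p = [pi - lr * vi for pi, vi in zip(p, v)]
    return p


def adam(start, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, n_steps=500):
    """Adam: a running mean of the gradient, with a per-coordinate step
    size scaled by a running estimate of the squared gradient."""
    p = list(start)
    m = [0.0, 0.0]  # first-moment (mean) estimate
    s = [0.0, 0.0]  # second-moment (uncentered variance) estimate
    for t in range(1, n_steps + 1):
        g = grad(p)
        m = [beta1 * mi + (1 - beta1) * gi for mi, gi in zip(m, g)]
        s = [beta2 * si + (1 - beta2) * gi * gi for si, gi in zip(s, g)]
        # Correct the bias toward zero caused by initializing m and s at zero.
        m_hat = [mi / (1 - beta1**t) for mi in m]
        s_hat = [si / (1 - beta2**t) for si in s]
        p = [pi - lr * mh / (math.sqrt(sh) + eps)
             for pi, mh, sh in zip(p, m_hat, s_hat)]
    return p


start = (-4.0, 2.0)
print("momentum:", momentum(start))
print("adam:    ", adam(start))
```

The momentum iterate ends very close to the minimum at the origin, while Adam with a constant learning rate typically settles into a small neighborhood of it rather than converging exactly. Some texts write the momentum velocity as an exponential average, $v \leftarrow \beta v + (1 - \beta) g$; the two forms differ only by a rescaling of the learning rate.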

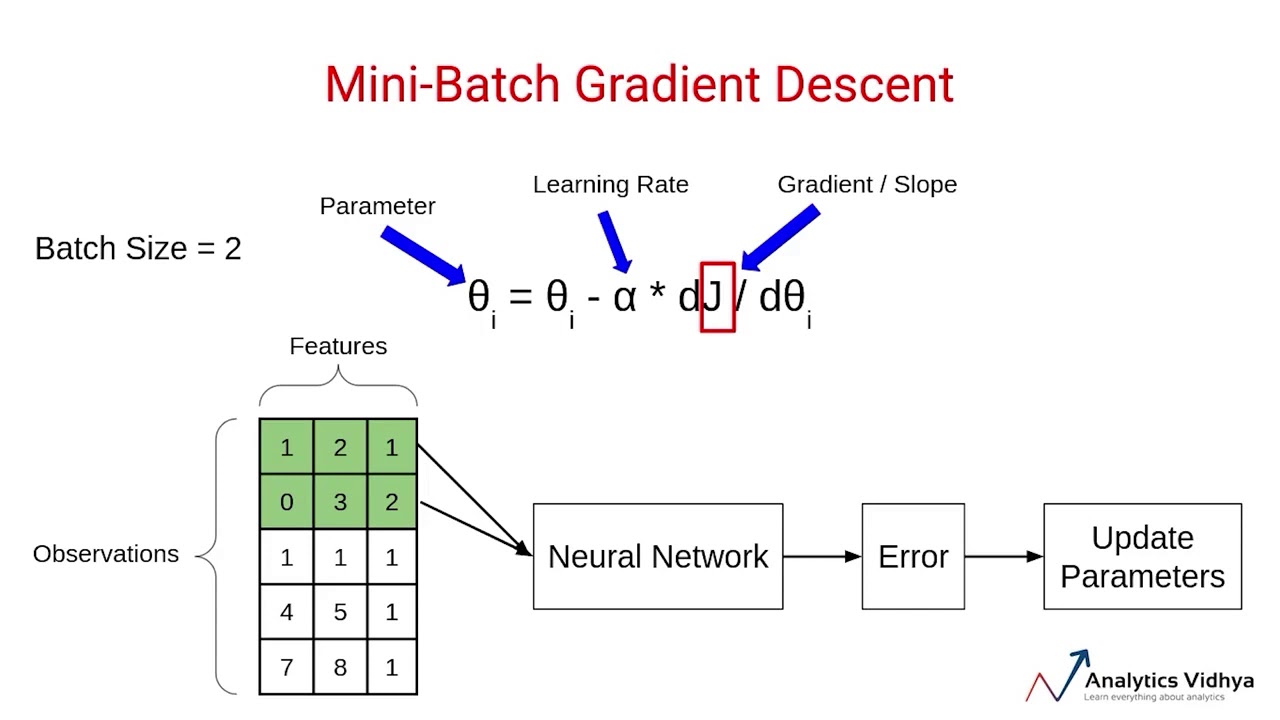

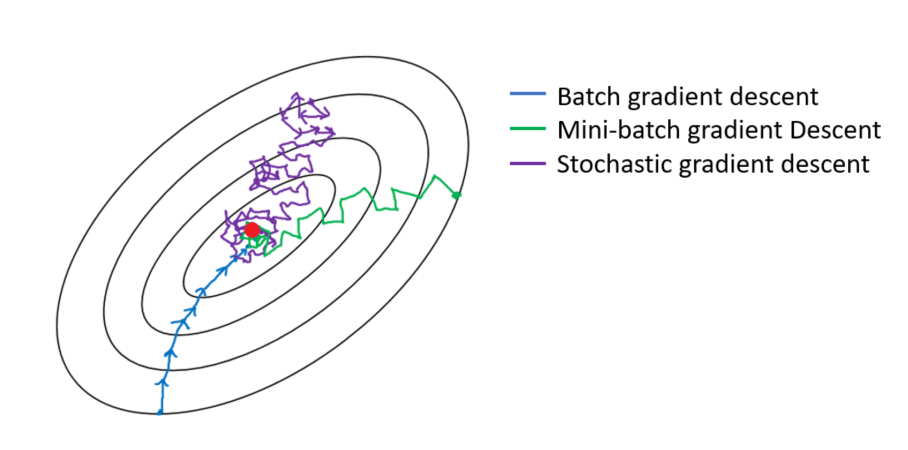

Variants of Gradient Descent Algorithm

Why Visualize Gradient Descent Optimization Algorithms?, by Ashish Rana

Gradient-Based Optimizers in Deep Learning - Analytics Vidhya

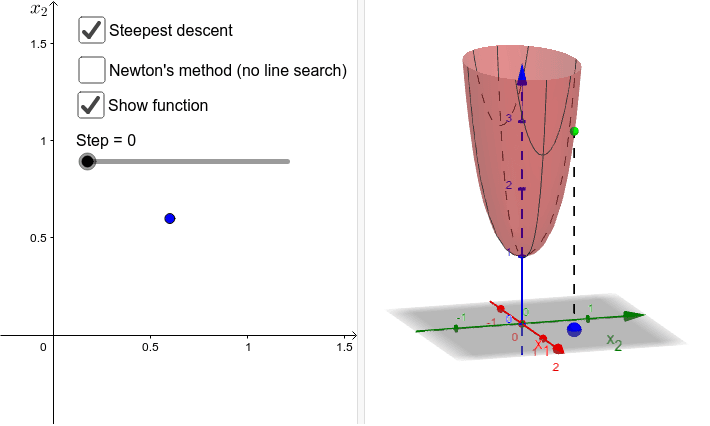

Visualize various gradient descent algorithms

Simplistic Visualization on How Gradient Descent works

Stochastic Gradient Descent (SGD): A New Way to Visualize This Beauty, by Ketan Suhaas Saichandran
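Stochastic gradient descent replaces the full-batch gradient with the gradient of the loss on a single, randomly chosen example at each step, which makes every update cheaper but noisier. The minimal sketch below assumes a straight-line hypothesis $h_\boldsymbol{\theta}(x) = \theta_0 + \theta_1 x$ and per-example loss $\frac{1}{2}\big(h_\boldsymbol{\theta}(x^{(i)}) - y^{(i)}\big)^2$; the function name, seed, learning rate, step count and data are illustrative assumptions, not taken from Saichandran's article.

```python
import random


def sgd(xs, ys, alpha=0.05, n_steps=3000, seed=0):
    """Stochastic gradient descent for the assumed hypothesis h(x) = theta0 + theta1 * x.

    Each step uses the gradient of the squared error on one randomly
    chosen example instead of the average over the whole data set.
    """
    rng = random.Random(seed)  # seeded so the run is reproducible
    theta0 = theta1 = 0.0
    path = [(theta0, theta1)]
    for _ in range(n_steps):
        i = rng.randrange(len(xs))
        r = theta0 + theta1 * xs[i] - ys[i]  # residual on a single example
        # Gradient of 0.5 * r**2 is (r, r * x_i) with respect to (theta0, theta1).
        theta0, theta1 = theta0 - alpha * r, theta1 - alpha * r * xs[i]
        path.append((theta0, theta1))
    return path


path = sgd([0, 1, 2, 3, 4], [1, 3, 5, 7, 9])
print(path[-1])
```

Because these hypothetical points lie exactly on $y = 1 + 2x$, every per-example gradient vanishes at $\theta_0 = 1$, $\theta_1 = 2$, so a constant step suffices here. With noisy data, SGD with a constant step keeps fluctuating around the minimum, and a decaying learning rate is commonly used; plotting `path` shows the characteristic zig-zag compared with batch descent.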

How to visualize Gradient Descent using Contour plot in Python

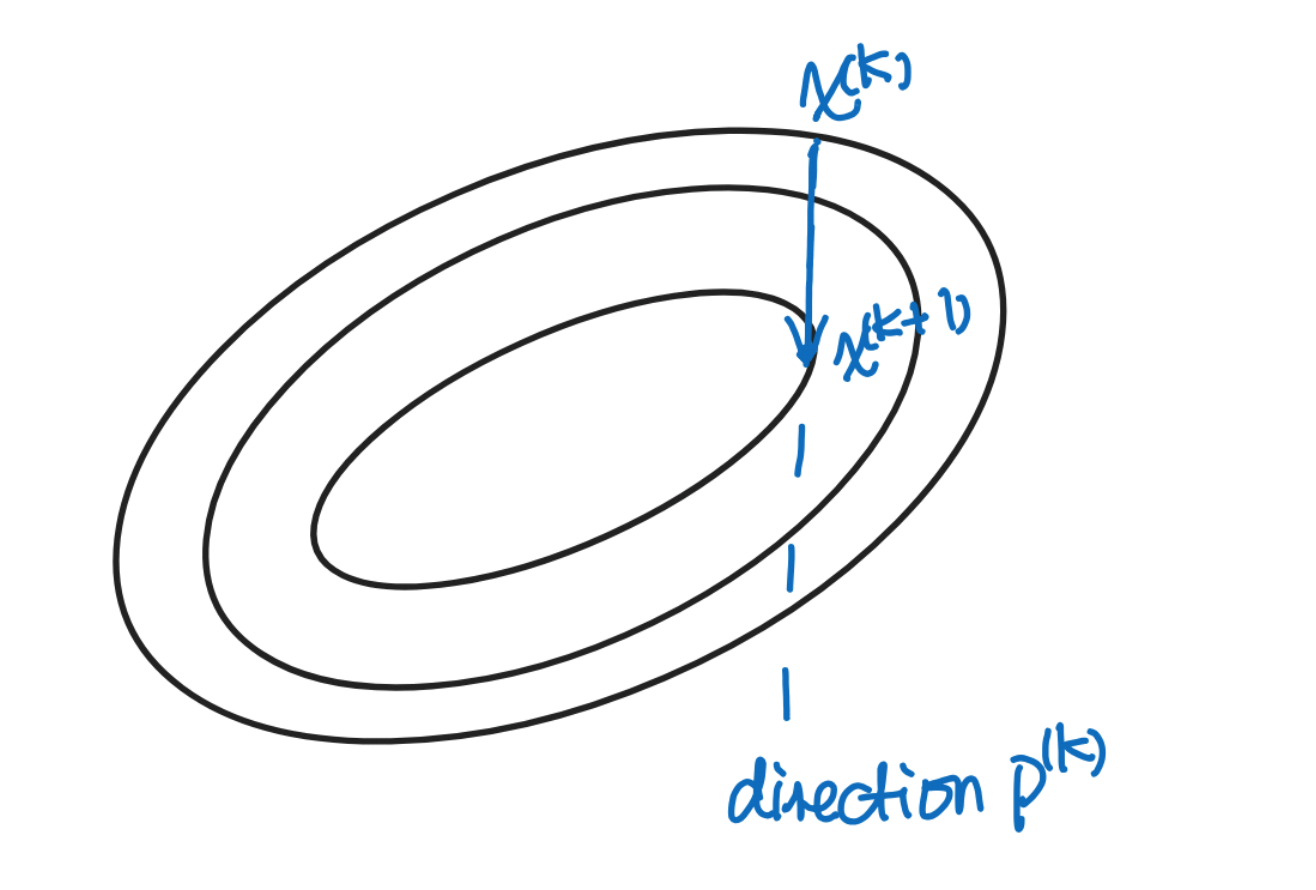

Gradient descent.

Guide to Gradient Descent Algorithm: A Comprehensive implementation in Python - Machine Learning Space

Reducing Loss: Gradient Descent, Machine Learning

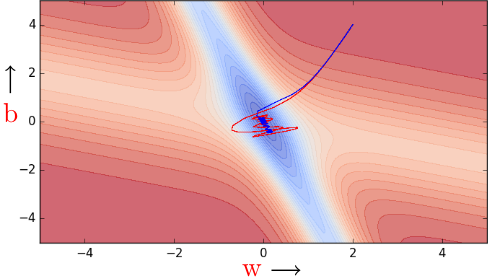

Gradient descent visualization - hills

Visualizing Newton's Method for Optimization II
